Week 3 / Responsible consumers make responsible creators

FaceApp went viral. So did its privacy concerns. Why is this a perfect time to educate people to think responsibly, and how might we do so?

On my way home last week, I got a message (a FaceApp-ed picture of my dad) from him as I was reading the New York Times’ article questioning the app's handling of user privacy. Maybe because both things happened at the same moment, the contrast really struck me. On the one hand, people are having fun visualizing their other selves. On the other, people are running around worrying about the future of the data that is collected. The latter group claims that we should be learning from the Cambridge Analytica incident and implies that FaceApp’s lax terms and conditions are irresponsible.

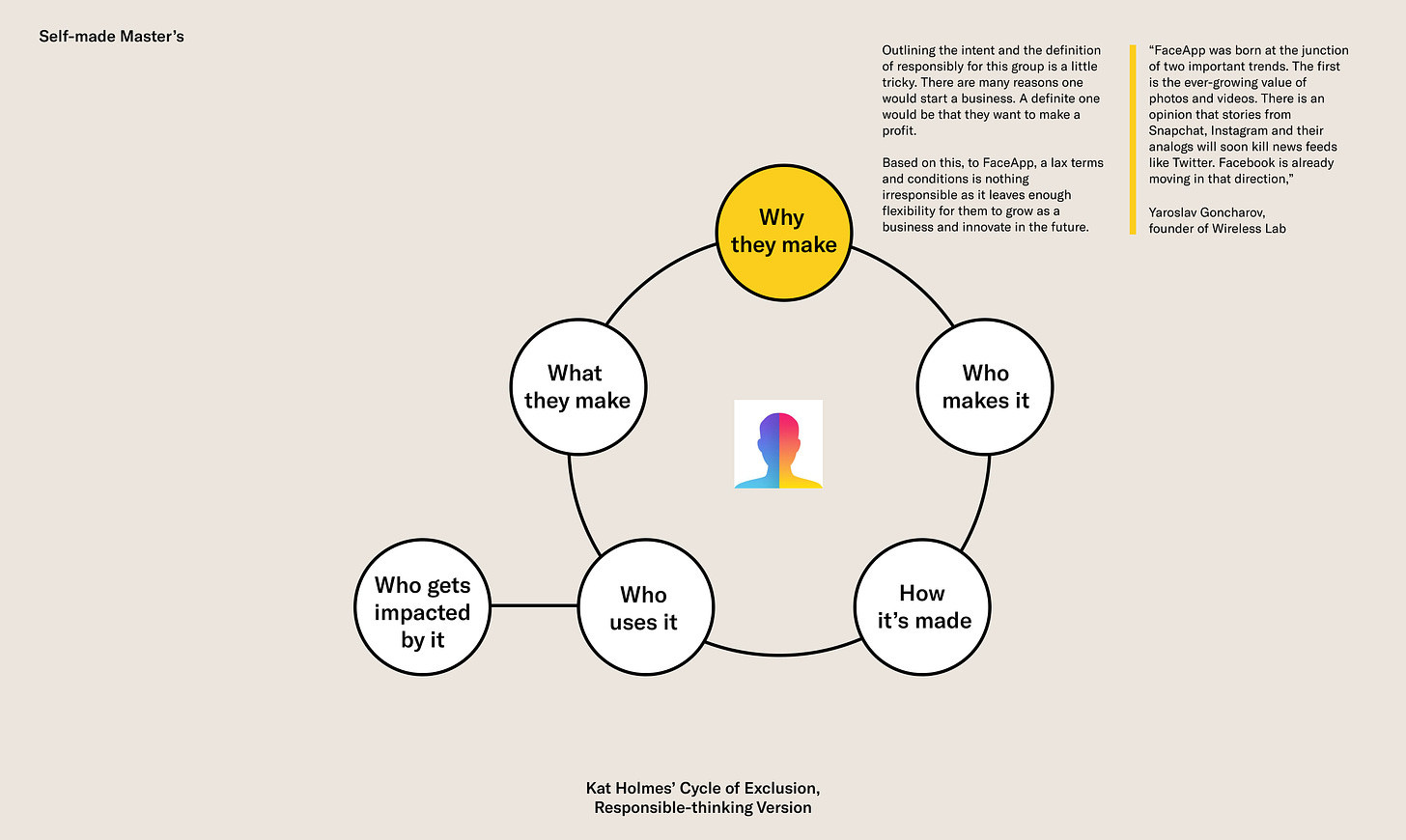

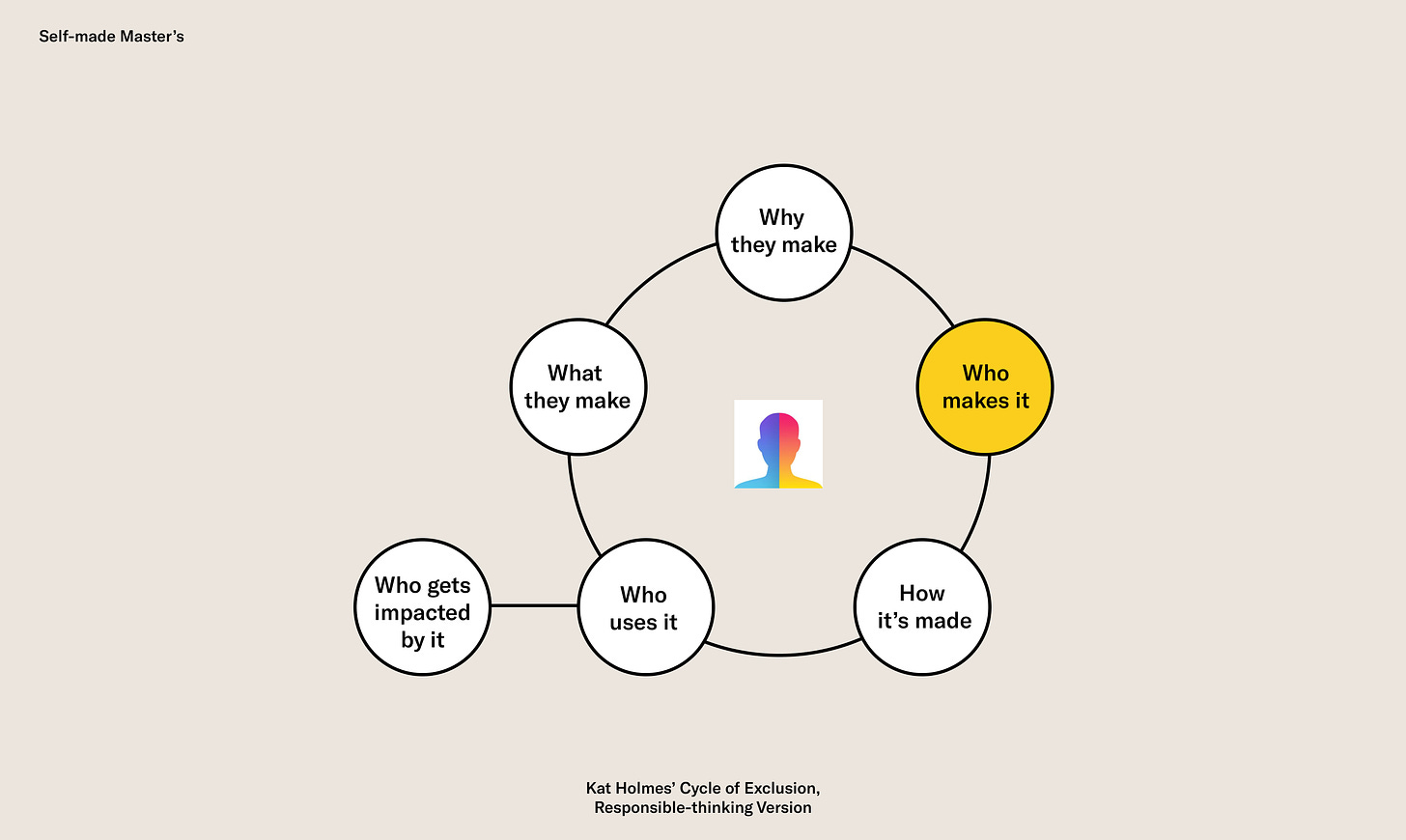

This week, I explored responsible thinking by unpacking FaceApp's privacy concerns. I adopted and adapted Kat Holmes' Cycle of Exclusion framework to offer a holistic view of the product, which I believe is crucial for responsible-thinking education. My hypothesis is that if people are educated to think responsibly as consumers, they will also apply the same responsible mindset when they bring products or services to the world as creators.

Analyzing

what I have been digging into...

The current phase of this case is very interesting. Because Wireless Lab, the company behind FaceApp, hasn't "done something bad" with the data, there is technically nothing for the company to be responsible for yet. In addition, the types of data that FaceApp collects and how they could be used are actually pretty similar to those of other photo-editing apps like Google's Snapseed. What spurred a lot of anxiety, especially in the US and EU, is that Wireless Lab is a Russia-based company and a similar incident happened previously. I think this moment, when no one knows what is going to happen next, is a perfect time to educate people to think responsibly.

Experimenting

with new possibilities...

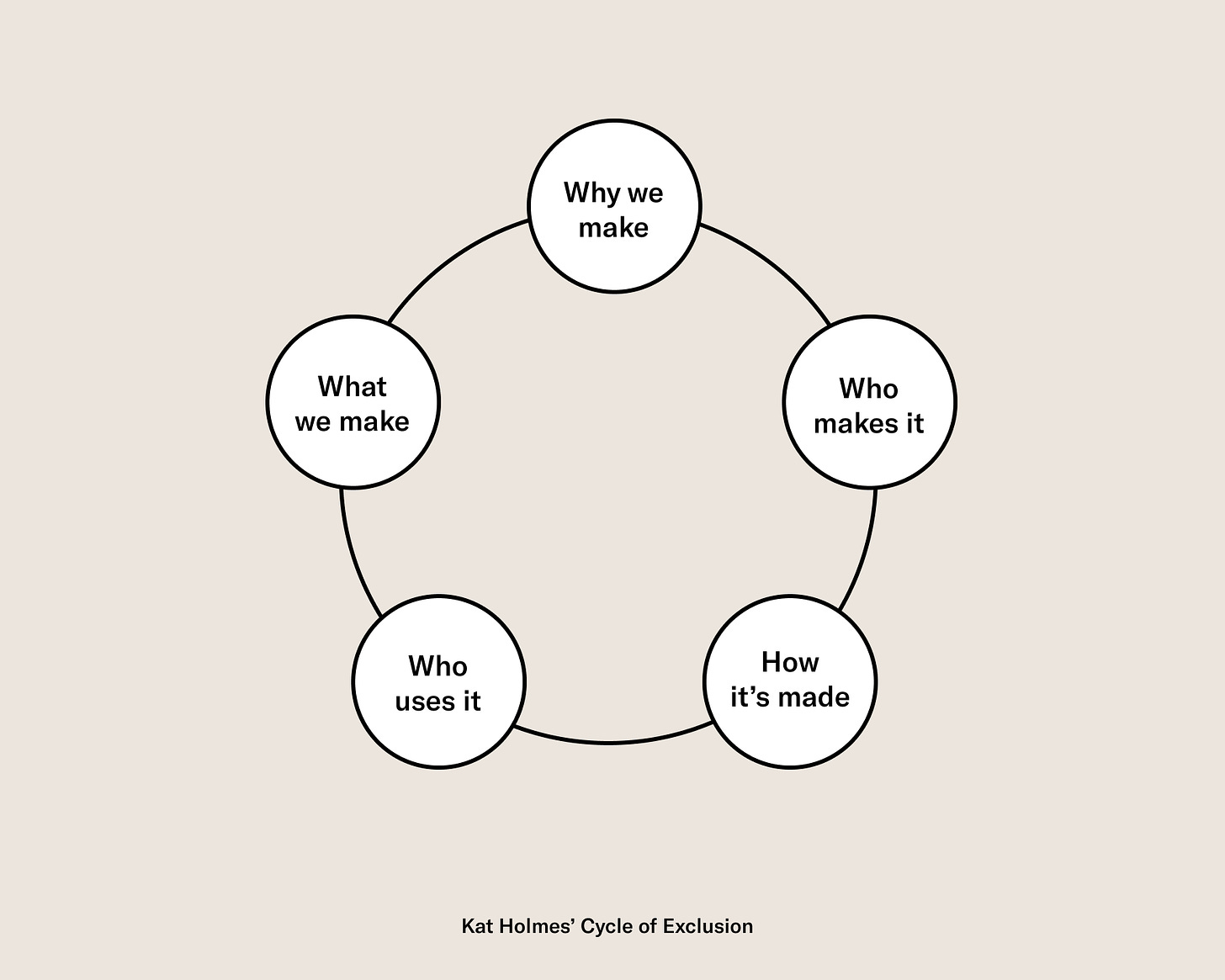

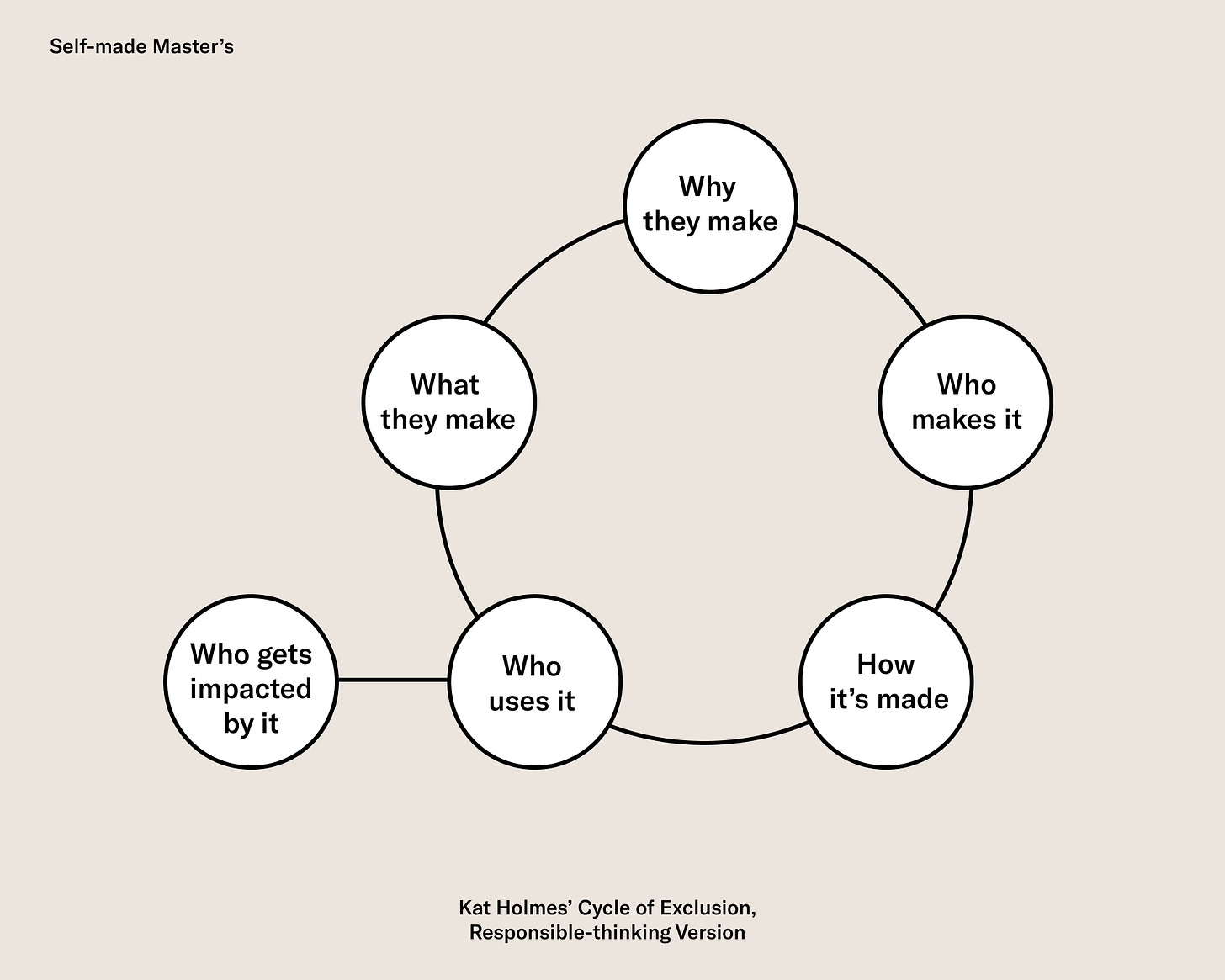

As highlighted in the previous weeks' experiments (see week 1 and week 2), a key to educating people to think more responsibly is to enable them to be aware of and empathize with diverse perspectives. Through a deep understanding of diverse perspectives, people can better weigh trade-offs, prioritize, and shape their sense of responsibility. Although primarily used to examine how we perpetuate mismatched designs, Kat Holmes' Cycle of Exclusion framework is also powerful for getting a holistic picture of the potential impact of a product.

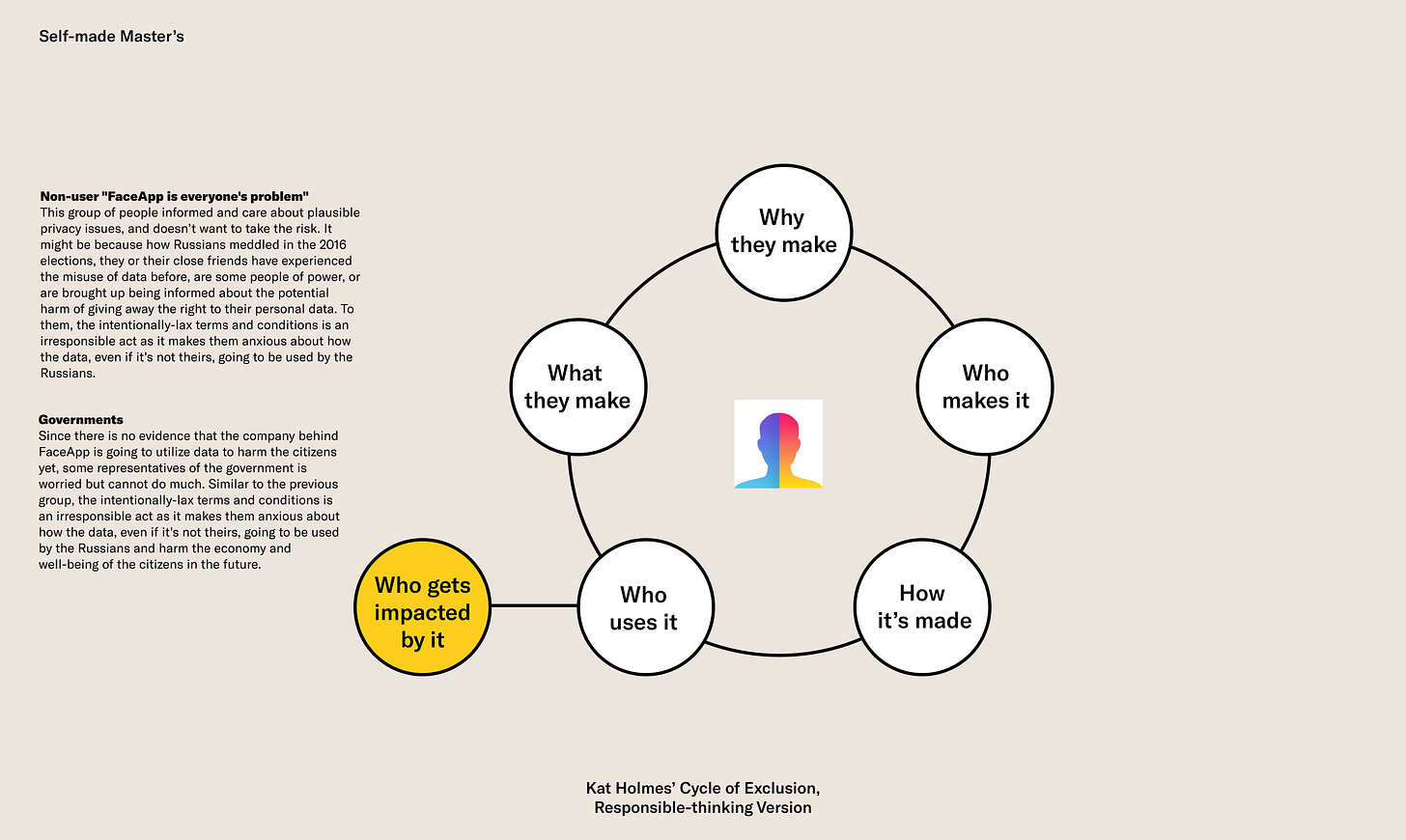

Because the framework will be used to analyze from the consumers' (third-person) perspective, "why we make" becomes "why they make" and "what we make" becomes "what they make." In addition to the five components, I'm introducing "who gets impacted by it." It refers to non-users who get impacted as a result of others using the product.

Why they make

Who makes it

How it's made

In addition to how it's made, I will also outline what data FaceApp collects and how Wireless Lab, the startup behind the app, might use the data based on its terms and conditions.

By accepting the product's terms and conditions, users grant the company the right “to use, reproduce, modify, adapt, publish, translate, create derivative works from, distribute, publicly perform and display your User Content and any name, username or likeness provided in connection with User Content in all media formats and channels now known or later developed” as well as “to transfer information that [they] collect about [user], including personal information across borders and from [user] country or jurisdiction to other countries or jurisdictions around the world.” In short, users allow the company to do almost whatever it wants with the data. These lax terms and conditions are what fueled the anxiety.

Who uses it

Who gets impacted by it

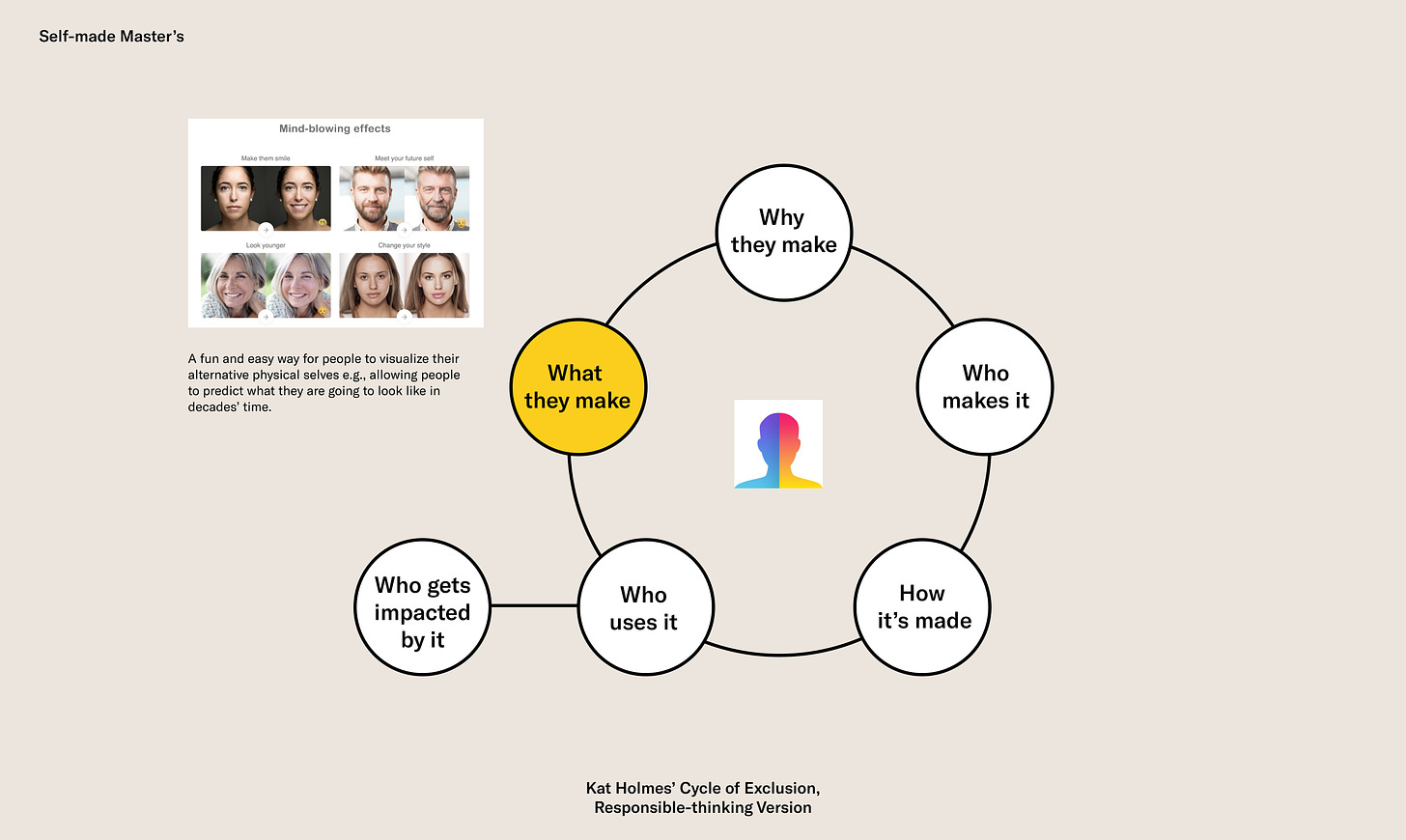

What they make

Here are the key insights I got from looking at the case holistically:

How FaceApp went viral shows that there are many people who fall into the "don't know and don't care about privacy issues" bucket. This reflects a failure of traditional education to train people to educate themselves and evaluate their potential risks. That said, the articles and videos that came out not only reflect the level of anxiety but also offer a more accessible alternative education about the potential harm, enabled by the technology we have today.

The most significant trade-offs for protecting people's privacy are economic factors like the rate of innovation and economic growth. Let's say that the government imposed stricter privacy regulations that don't allow companies to collect data that could potentially "harm" people. Companies might lose opportunities to innovate, which would slow down growth. However, constraints could also bring a different form of innovation that might generate more growth. There is no absolute right and wrong, which makes responsibility hard to judge and thus hard to teach.

The majority of people are both consumers and creators. If people are educated to be responsible for their own actions as consumers, they will also create responsibly.

“Up until now, education has been about improving individuals. What education should be about in the future is improving the world, and having individuals improve in that process.”

— Marc Prensky

I believe that educating people to think and act more responsibly is one of the means to improve not only the world but also our individual well-being. When we can think more responsibly, we can better evaluate the risks involved in our decisions against our values and are prepared to take responsibility for our decisions in the midst of uncertainties.

Sourcing

for your unique perspectives...

For this week, I would love to hear your thoughts on frameworks you use to evaluate consumption decisions (big and small). I am also curious to hear what your definition of "being responsible" is. Reply or send me a Twitter DM to share your thoughts.

Thanks for making it this far!

Happy self-made,

Mind

*All views are my own